DBIT

Magazine

Låsesmed Herlev: Tools a locksmith uses

Here is Låsesmed Herlev's list of the tools a locksmith uses. A locksmith uses a wide range of tools.

Online

3 interesting tech investments in 2022

A 4th COVID jab and a 5th COVID jab might surprise you, or will they?

Get an LEI code registered in no time

What do locksmiths cost in Copenhagen, e.g. in Østerbro?

What properties does white mold have?

A perfect solution for business owners

What does a football trip cost? Tips: find cheap tickets online

What is TikTok?

Entertainment

Make vehicle inspection a little easier: book it close to home

How to find lost keys effectively

How do I copy images in Photoshop?

Best Netflix films, winter 2020

News

Save money with a cost-saving payroll system

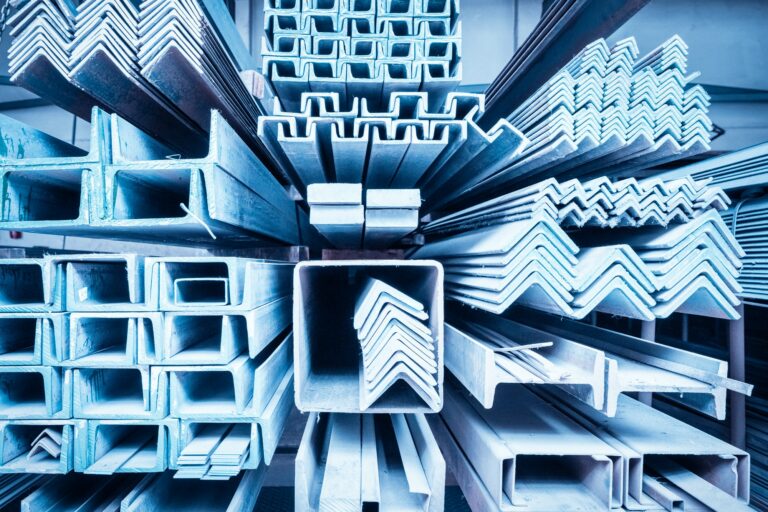

Fluctuating prices for building materials

General knowledge

ADK: Access control

What can I do if I feel stressed?

Articles

Best Netflix films, winter 2020

The best Netflix films of winter 2020: classics and new favorites

What is a lightning arrester (surge protection), and how does it work?

Fortunately, you can protect your home with whole-house surge protection (a lightning arrester). Read about installing lightning arresters here

ADK: Access control

Electronic access control makes it easy, fast, and secure to control who is allowed to enter a building

How to keep the whole family active at the beach

Make sure the teenager can use their electronics at the beach despite the sand, and that the dog is kept well entertained with some beach toys

Use your computer to find help with practical tasks around the home

Do you hate cleaning and want help with it? Or do you need a helping hand to put

How to find lost keys effectively

You left your keys right there on the table, but you can't find them,

Light therapy lamp: what is it?

In this article you can learn about light therapy and what it can do.

What does a football trip cost? Tips: find cheap tickets online

What does it actually cost to go on a football trip? Plus tips for the trip. What does it cost

What is TikTok?

An adult's guide to TikTok: what it is and what it can do

15 Google services you should know

Google can do far more than the average Dane realizes. Here are 15 things Google can do

What do locksmiths cost in Copenhagen, e.g. in Østerbro?

The price of locksmiths in Copenhagen, including in Østerbro, can vary depending on several factors, including the type of lock,

What properties does white mold have?

White mold, like other types of mold, has various properties that can vary depending on the specific species or strain.

Proper dehumidification: 11 tips for using a dehumidifier effectively

To dehumidify a room as effectively as possible, you can use dehumidifiers, increase ventilation, and reduce sources of moisture such as plants,

Make vehicle inspection a little easier: book it close to home

Is your car due for inspection soon? Perhaps you have received the notice from Færdselsstyrelsen that it is now

Make the workday run more smoothly with this equipment

As a business owner, you should make sure that day-to-day operations run as smoothly as possible. Various challenges can, after all, be

How to use marketing in your business

It is important to make use of marketing when you run a business. Online marketing in particular is important

Build a healthy digital infrastructure

Would you like to improve your infrastructure when it comes to digital solutions? It is important,

Professional help with finances and accounting

Do you have a business where things are growing a little bigger than you can keep up with

Latest

What do locksmiths cost in Copenhagen, e.g. in Østerbro?

Website designers: what characterizes a skilled web designer?

What is a lightning arrester (surge protection), and how does it work?

Make vehicle inspection a little easier: book it close to home

Popular

Should we contact you?

Write to us here, and we will get back to you as soon as possible, within a couple of business days